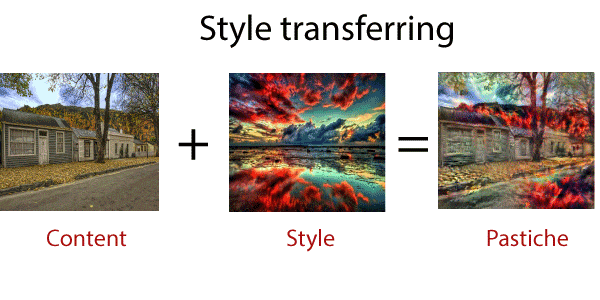

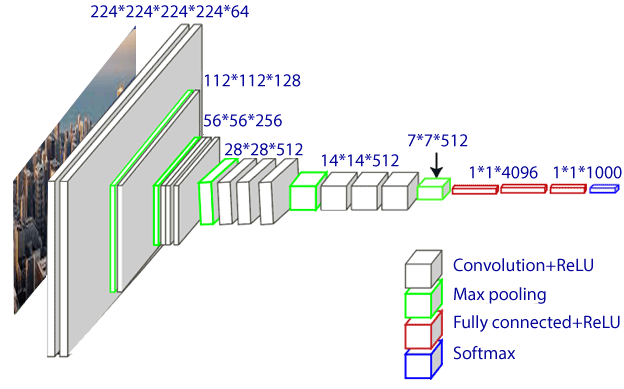

# Style Transferring in TensorFlow

Neural Style Transfer (NST) refers to a class of software algorithms that manipulate digital images or videos so that they adopt the appearance or visual style of another image. When we implement the algorithm, we define two distances: one for the content (Dc) and another for the style (Ds).

In this topic, we will implement an artificial system based on a deep neural network that creates images of high perceptual quality. The system uses neural representations to separate and recombine the content and style of images: it takes a content image and a style image as input and returns the content image as if it were painted in the artistic style of the style image.

Neural style transfer is an optimization technique that takes two images, a content image and a style reference image, and blends them. The output image is optimized to match the content statistics of the content image and the style statistics of the style reference image, so that it looks like the content image rendered in the reference style. These statistics are extracted from the images using a convolutional network.

## Working of the neural style transfer algorithm

When we implement the algorithm, we define two distances: one for the style (Ds) and one for the content (Dc). Dc measures how different the content is between two images, and Ds measures how different the style is between two images. We then take a third image, the input, and transform it so as to minimize both its content distance to the content image and its style distance to the style image.

## Libraries Required

## VGG-19 model

The VGG-19 model is similar to the VGG-16 model. The VGG models were introduced by Simonyan and Zisserman. VGG-19 is trained on more than a million images from the ImageNet database. The network is 19 layers deep and can classify images into 1000 object categories.

## High-level architecture

Neural style transfer uses a pretrained convolutional neural network. We then define a loss function that blends the two images seamlessly to create visually appealing art. NST defines the following inputs:

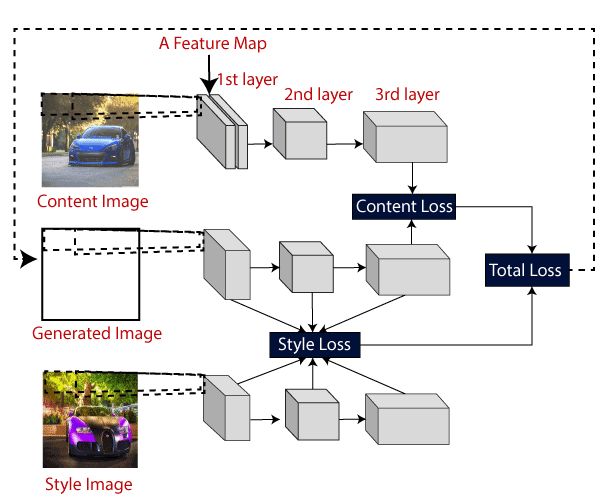
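The key idea in the algorithm described above is that the image itself is the quantity being optimized, not any network weights. The following is a deliberately simplified sketch of that idea. It is not real NST: the function name `toy_style_transfer`, the weights `alpha` and `beta`, and the use of plain squared pixel differences as stand-ins for Dc and Ds are all assumptions made for illustration, whereas the actual distances are computed from CNN activations.

```python
import numpy as np

def toy_style_transfer(content, style, alpha=1.0, beta=1.0, lr=0.1, steps=200):
    """Gradient descent on the image itself against alpha * Dc + beta * Ds.

    Dc and Ds are summed squared pixel differences here, a toy stand-in
    for the CNN-based content and style distances used by real NST.
    """
    content = np.array(content, dtype=float)
    style = np.array(style, dtype=float)
    x = content.copy()  # start the optimization from the content image
    for _ in range(steps):
        grad_dc = 2.0 * (x - content)  # gradient of sum((x - content)^2)
        grad_ds = 2.0 * (x - style)    # gradient of sum((x - style)^2)
        x -= lr * (alpha * grad_dc + beta * grad_ds)
    return x
```

With these toy distances the result simply settles at a weighted pixel average of the two images, which is exactly why real NST replaces them with distances measured on network activations.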

The architecture of the model, along with how the loss is computed, is shown below. We do not need a deep understanding of everything in the image yet, as we will look at each component in detail in the following sections. The idea is to give a high-level understanding of the workflow that takes place during style transfer.

## Downloading and loading the pretrained VGG-16

We will borrow the VGG-16 weights from this webpage. We need to download the `vgg16_weights.npz` file and place it in a folder called `vgg` in our project home directory. We only need the convolution and pooling layers. Specifically, we load the first seven convolutional layers to use as the NST network. We can do this using the `load_weights(...)` function given in the notebook.

Note: We can try more layers, but beware of the memory limitations of our CPU and GPU.

## Define the functions to build the style transfer network

We define several functions that will later help us fully define the computational graph of the CNN for a given input.

### Creating TensorFlow variables

We load the NumPy arrays into TensorFlow variables. We create the following variables:

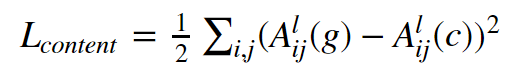

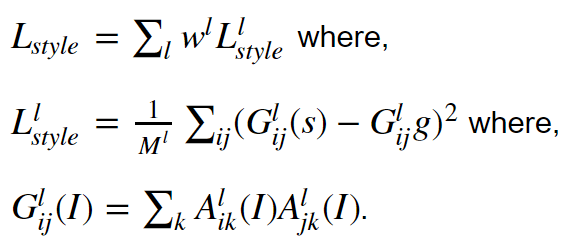

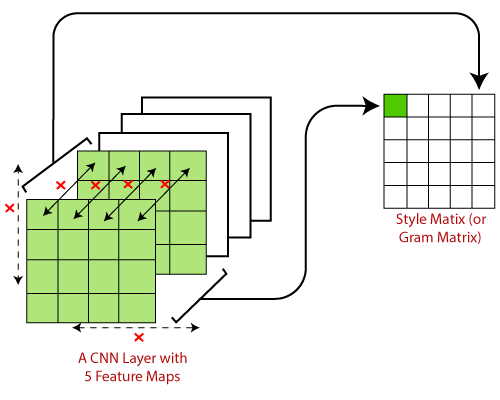
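As a rough illustration of the weight-loading step, the sketch below reads only the first seven convolutional layers from an `.npz` archive using NumPy. It is not the notebook's `load_weights(...)` function. The helper name `load_conv_weights`, the layer names in `NST_LAYERS`, and the `<layer>_W` / `<layer>_b` key naming are assumptions about how the archive is laid out and should be checked against the actual file.

```python
import numpy as np

# Assumed names of the first seven convolutional layers of VGG-16.
NST_LAYERS = ("conv1_1", "conv1_2", "conv2_1", "conv2_2",
              "conv3_1", "conv3_2", "conv3_3")

def load_conv_weights(path, layer_names=NST_LAYERS):
    """Return {layer: (kernel, bias)} for the requested conv layers only.

    Assumes each layer is stored under the keys '<name>_W' and '<name>_b'.
    Fully connected layers in the archive are simply never read.
    """
    weights = {}
    with np.load(path) as archive:
        for name in layer_names:
            weights[name] = (archive[name + "_W"], archive[name + "_b"])
    return weights
```

A call such as `load_conv_weights("vgg/vgg16_weights.npz")` would then return plain NumPy arrays, which can be wrapped in non-trainable TensorFlow variables or constants.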

Make sure we leave the generated image trainable while keeping the pretrained weights and biases frozen. We show two functions: one to define the input and one to define the neural network weights.

### Computing the VGG net output

## Loss functions

In this section, we define two loss functions: the style loss function and the content loss function. The content loss function ensures that the activations of the higher layers are similar between the generated image and the content image.

### Content cost function

The content cost function ensures that the content present in the content image is captured in the generated image. It has been found that CNNs capture information about content in the higher layers, whereas the lower layers are more focused on individual pixel values.

Let $A^l_{ij}(I)$ be the activation of the $l$th layer, $i$th feature map, and $j$th position obtained using the image $I$. Then the content loss is defined as

$$L_{content} = \frac{1}{2} \sum_{i,j} \left( A^l_{ij}(g) - A^l_{ij}(c) \right)^2$$

where $g$ is the generated image and $c$ is the content image.

### The intuition behind the content loss

If we visualize what a neural network learns, there is evidence suggesting that different feature maps in the higher layers are activated in the presence of different objects. So if two images have the same content, they should have similar activations in the higher layers, which is exactly what the content cost measures.

### Style loss function

Defining the style loss function requires more work. To extract the style information from the VGG network, we use all the layers of the CNN. Style information is measured as the amount of correlation present between the feature maps in a layer. Mathematically, the style loss is defined as

$$L_{style} = \sum_l w^l L^l_{style}, \qquad L^l_{style} = \frac{1}{M^l} \sum_{i,j} \left( G^l_{ij}(s) - G^l_{ij}(g) \right)^2, \qquad G^l_{ij}(I) = \sum_k A^l_{ik}(I)\, A^l_{jk}(I)$$

where $s$ is the style image, $w^l$ is the weight given to layer $l$, and $M^l$ is a normalization constant that depends on the size of layer $l$.

### Intuition behind the style loss

The idea behind the equation above is simple. The main goal is to compute a style matrix (the Gram matrix $G$) for the generated image and for the style image. The style loss is then defined as the mean squared difference between the two style matrices.
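To make the two losses concrete, here is a minimal NumPy sketch that works on activation arrays which are assumed to have already been extracted from the network, with shape `(height, width, channels)`. The function names, the uniform default layer weights, and the use of a plain mean over Gram-matrix entries as the normalization are assumptions chosen for illustration. In a TensorFlow graph the same arithmetic would be written with the corresponding tensor operations.

```python
import numpy as np

def content_loss(gen_act, content_act):
    """Half the summed squared difference between two activation volumes."""
    return 0.5 * np.sum((gen_act - content_act) ** 2)

def gram_matrix(act):
    """Correlations between the feature maps of one layer.

    act has shape (height, width, channels); the result has shape
    (channels, channels) and no longer depends on spatial position.
    """
    h, w, c = act.shape
    flat = act.reshape(h * w, c)  # rows: positions, columns: feature maps
    return flat.T @ flat

def style_loss(gen_acts, style_acts, layer_weights=None):
    """Weighted sum over layers of the mean squared Gram-matrix difference."""
    if layer_weights is None:
        # Assumption: weight every layer equally.
        layer_weights = [1.0 / len(gen_acts)] * len(gen_acts)
    total = 0.0
    for w_l, g, s in zip(layer_weights, gen_acts, style_acts):
        diff = gram_matrix(g) - gram_matrix(s)
        total += w_l * np.mean(diff ** 2)
    return total
```

One consequence of the Gram matrix is that the style loss ignores where features occur: flipping an activation volume spatially leaves its style loss against the original at essentially zero, while its content loss against the original is generally nonzero.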